What is Apple's OpenELM?

Everything about Apple's new tech

25. 4. 2024

Natural language processing (NLP) capabilities are transforming the way we interact with our devices. Apple devices, known for their seamless user experiences, stand to gain significantly from integrating advanced open-source language models like OpenELM. In this article, we delve into custom OpenELM development specifically targeted for Apple devices, exploring the potential benefits, development considerations, and the expertise offered by Digital Trans4orMation s.r.o.

What is OpenELM?

OpenELM is a family of open, efficient language models from Apple. Instead of giving every transformer layer the same size, it allocates parameters non-uniformly across layers to get more accuracy out of a fixed parameter budget. The models were pre-trained with CoreNet, Apple's library for training deep neural networks. OpenELM is released in four sizes (270M, 450M, 1.1B, and 3B parameters), each as a pre-trained and an instruction-tuned variant. This range makes it adaptable to Apple devices, which differ widely in computing capability.

Understanding OpenELM: Technical Foundations

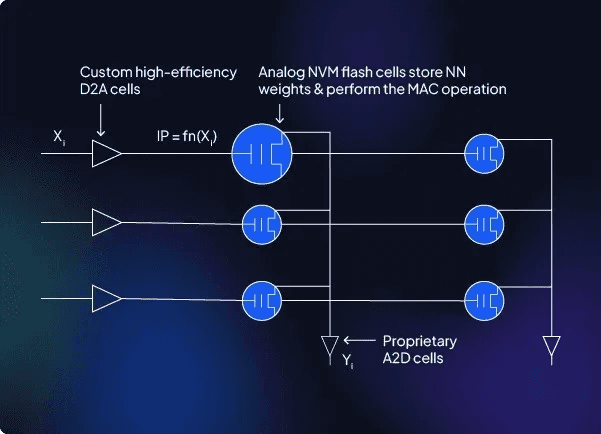

OpenELM's design makes it a compelling choice for Apple devices. It uses layer-wise parameter scaling within the transformer architecture: rather than repeating one identical block, each layer gets its own number of attention heads and its own feed-forward width, so the parameter budget is spent where it helps most. This addresses a core challenge of deploying LLMs on Apple hardware, where computational power, battery life, and thermal management have to be balanced.
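Layer-wise scaling can be illustrated with a short sketch. It follows the linear interpolation described in the OpenELM paper as we understand it: two hypothetical ranges, `alpha_min..alpha_max` for attention and `beta_min..beta_max` for the feed-forward multiplier, are interpolated from the first layer to the last. The function name, the `LayerConfig` type, and the numbers in the demo are illustrative assumptions, not the released model configuration.

```python
from dataclasses import dataclass


@dataclass
class LayerConfig:
    index: int
    num_heads: int
    ffn_dim: int


def layerwise_scaling(num_layers, d_model, head_dim,
                      alpha_min, alpha_max, beta_min, beta_max):
    """Linearly interpolate attention and FFN widths across layers.

    Illustrative sketch only: the parameter names and rounding rules
    are assumptions, not Apple's implementation.
    """
    configs = []
    for i in range(num_layers):
        # Position in the stack: 0.0 at the first layer, 1.0 at the last.
        t = i / (num_layers - 1) if num_layers > 1 else 0.0
        alpha = alpha_min + (alpha_max - alpha_min) * t
        beta = beta_min + (beta_max - beta_min) * t
        # Attention width scales the head count; FFN width scales the hidden size.
        num_heads = max(1, round(alpha * d_model / head_dim))
        ffn_dim = round(beta * d_model)
        configs.append(LayerConfig(i, num_heads, ffn_dim))
    return configs


if __name__ == "__main__":
    # Hypothetical toy model: 4 layers, d_model=1024, 64-dim heads.
    for cfg in layerwise_scaling(4, 1024, 64, 0.5, 1.0, 1.0, 4.0):
        print(cfg)
```

In this toy setup the early layers are narrow and the later layers are wide, which is the opposite of a uniform transformer where every layer has the same head count and feed-forward size.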

OpenELM models were pre-trained on publicly available datasets using CoreNet, Apple's open-source library for training deep neural networks. CoreNet is a training framework, not an on-device inference runtime, so it does not by itself make inference faster. For running on Apple silicon, Apple also released code to convert the models to its MLX library for inference and fine-tuning. How responsive and power-efficient the end-user experience is depends on that deployment path and on the model size chosen.

The range of pre-trained and instruction-tuned OpenELM models lets developers match the model to the use case and the hardware profile. A smaller model suits low-latency, assistant-style interactions on an iPhone, while a larger one can maximize generative quality on a high-end MacBook.
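A rough way to reason about which model fits which device is to estimate the memory taken by the weights alone: parameter count times bits per weight. The sketch below uses the nominal parameter counts from the model names; the helper names and the memory budgets are hypothetical, and the estimate ignores activations, the key-value cache, and runtime overhead, so real requirements are higher.

```python
# Nominal parameter counts of the released OpenELM sizes.
OPENELM_PARAMS = {
    "OpenELM-270M": 270_000_000,
    "OpenELM-450M": 450_000_000,
    "OpenELM-1.1B": 1_100_000_000,
    "OpenELM-3B": 3_000_000_000,
}


def weight_memory_gib(num_params, bits_per_weight):
    """Memory needed for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / 2**30


def largest_model_that_fits(budget_gib, bits_per_weight):
    """Return the largest model whose weights fit the budget, or None."""
    fitting = [
        (params, name)
        for name, params in OPENELM_PARAMS.items()
        if weight_memory_gib(params, bits_per_weight) <= budget_gib
    ]
    return max(fitting)[1] if fitting else None


if __name__ == "__main__":
    for name, params in OPENELM_PARAMS.items():
        fp16 = weight_memory_gib(params, 16)
        int4 = weight_memory_gib(params, 4)
        print(f"{name}: {fp16:.2f} GiB at 16-bit, {int4:.2f} GiB at 4-bit")
    # Hypothetical 2 GiB budget for model weights on a phone.
    print(largest_model_that_fits(2.0, 16))
```

The same budget admits a much larger model once weights are stored at lower precision, which is why model size and quantization are usually chosen together.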

The Rationale for Custom OpenELM Development on Apple Devices

While OpenELM models provide a strong foundation, customization unlocks their full potential within the Apple ecosystem. Here's why:

Domain-Specific and Localized Intelligence: The ability to fine-tune OpenELM models on specialized datasets, ranging from technical terminology to regional languages, can empower Apple's intelligent features such as Siri or translation services.

Hardware-Optimized Performance: Custom development aligns OpenELM models with Apple's hardware and software stack, for example by choosing a model size and numeric precision that fit a given device's memory. This translates into better responsiveness and more efficient resource use, both essential for a seamless user experience.

Privacy-Centric Offline Functionality: Because the OpenELM weights are openly available, a customized model can be packaged to run entirely on the device, with no network calls at inference time. This enhances user privacy and keeps features working even with limited or unreliable network connectivity.

Unleashing App Innovation: Custom OpenELM integration serves as a catalyst for NLP-powered features within native Apple apps. More importantly, it grants third-party developers a powerful toolkit to build language-aware, intelligent app experiences with previously unattainable sophistication.

Illustrative Use Cases: Where Custom OpenELM Shines

Intelligent Text Composition and Editing: OpenELM's understanding of language can elevate predictive text suggestions far beyond simple autocompletion. Imagine advanced grammar correction, tone analysis, and contextually aware text generation that enhances creativity and writing efficiency.

Hyper-Responsive Virtual Assistants: Virtual assistants like Siri can become more conversational and informative. This could be achieved by custom OpenELM implementations that maintain contextual awareness, handle complex queries, and generate more natural, nuanced responses.

Advanced Summarization Capabilities: Applications that work with large bodies of text, such as notes, news aggregators, or research tools, can leverage OpenELM models to provide concise, insightful summaries. This can streamline workflows and help users quickly grasp the essence of information.

Unlocking App Innovation: Developers can tap into the power of OpenELM to build novel apps with features like intelligent code assistants, language-centric creative tools, or advanced customer support chatbots. The potential is virtually limitless.

Navigating Development Complexities

Hardware Heterogeneity: Apple devices span a range of hardware specifications. Strategies such as model quantization or selective deployment may be necessary to optimize performance across the device lineup.

Swift and Objective-C Proficiency: Fluency in Apple's primary programming languages is essential for seamless integration of OpenELM models into native iOS or iPadOS applications.

Apple Ecosystem Considerations: Developers must adhere to Apple's guidelines for on-device AI models, addressing potential limitations or platform-specific requirements for ensuring a smooth user experience.
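The model quantization mentioned under Hardware Heterogeneity can be sketched in a few lines. The example shows symmetric per-tensor 8-bit quantization on a plain list of floats: one scale factor maps the weights to integers in the range -127 to 127. This is a minimal illustration of the idea under simple assumptions, with hypothetical function names; production toolchains typically quantize per channel or per group and work on tensors, not Python lists.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One shared scale; fall back to 1.0 for an all-zero or empty tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the integers back to approximate float weights."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(quantized, scale, worst)
```

Each weight is stored in one byte instead of two or four, at the cost of a rounding error of at most half the scale per weight. Whether that error is acceptable has to be checked on the target task.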

Digital Trans4orMation s.r.o.: Experts on Apple Technologies

Digital Trans4orMation s.r.o. has been actively engaged with OpenELM since its release. This hands-on experience lets us support your custom OpenELM projects through:

Optimal Model Selection: Our team helps you identify the most appropriate OpenELM model or combination of models based on your application use case, performance targets, and hardware constraints.

Fine-Tuning and Optimization: We leverage our understanding of OpenELM to fine-tune models on your chosen datasets and optimize for Apple's hardware and software infrastructure.

Integration Expertise: We bridge the gap between model development and deployment, ensuring OpenELM capabilities are seamlessly integrated into new or existing iOS/iPadOS applications.

Performance Benchmarking and QA: We perform rigorous testing across a range of Apple devices to measure performance metrics and ensure a consistently delightful user experience.
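As an illustration of the kind of measurement behind performance benchmarking, the sketch below times repeated generation calls and reports median latency, worst-case latency, and tokens per second. The `benchmark` helper and the `fake_generate` stand-in are hypothetical; in practice the stand-in would be replaced by a call into the deployed model on the device under test.

```python
import statistics
import time


def benchmark(generate, prompt, max_new_tokens, runs=5, warmup=1):
    """Time a generation callable and summarize latency and throughput."""
    # Warm-up runs are excluded so one-time setup cost does not skew results.
    for _ in range(warmup):
        generate(prompt, max_new_tokens)
    latencies = []
    total_tokens = 0
    for _ in range(runs):
        start = time.perf_counter()
        output = generate(prompt, max_new_tokens)
        latencies.append(time.perf_counter() - start)
        total_tokens += len(output)
    total_time = sum(latencies)
    return {
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
        "tokens_per_s": total_tokens / total_time if total_time > 0 else float("inf"),
    }


def fake_generate(prompt, max_new_tokens):
    """Hypothetical stand-in for a real model call; returns dummy token ids."""
    time.sleep(0.001)
    return list(range(max_new_tokens))


if __name__ == "__main__":
    print(benchmark(fake_generate, "Hello", max_new_tokens=8, runs=3))
```

Running the same harness on each target device and model variant gives comparable numbers for choosing between them.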

written by: Matthew Drabek

For our Services, feel free to reach out to us via meeting…
